Google's 2MB Crawl Limit Is Coming for Some of Australia's Biggest Retail Sites

Published: 13 April 2026

Google has a hard limit on how much HTML it will crawl from any single page. Once your page exceeds 2MB, Google stops reading, and whatever sits beyond that threshold doesn't get indexed.

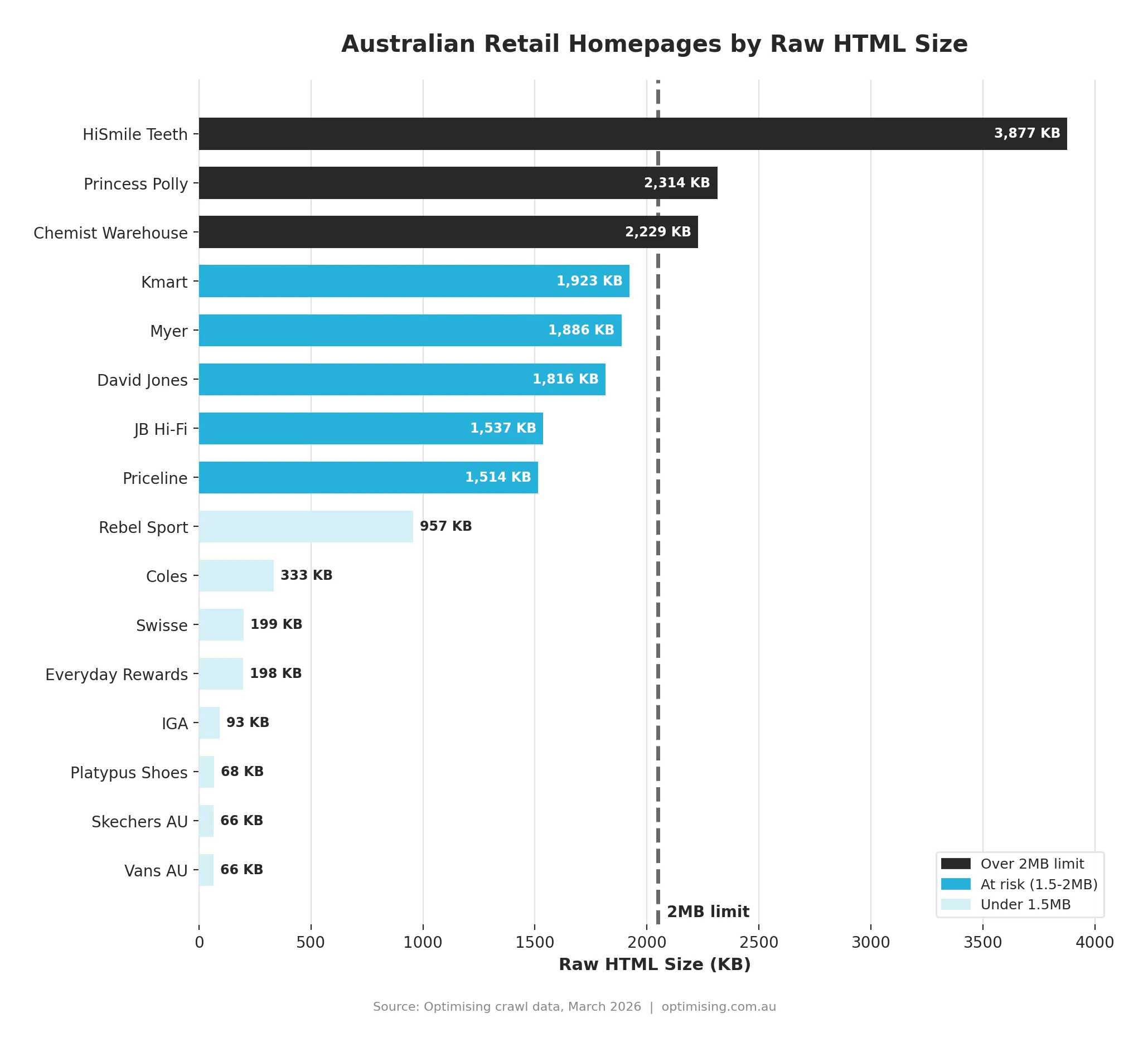

For most websites, that sounds abstract. For a small but significant number of major Australian retailers, it's a real problem. We crawled 35 major Australian retail homepages to find out exactly where they sit. Two sites are already over the limit. Several more are close enough that a single feature launch, campaign update, or theme change could push them across. A handful have the opposite problem: HTML so lean it's nearly empty, because they're letting JavaScript do the work the server should be doing.

The 2MB Limit, Explained

Google's crawler reads the raw HTML your server returns and processes up to 2MB of it. If your page returns 3MB, that last megabyte goes unread. Structured data, navigation, or product content sitting in the back half of a bloated document may never be indexed. Not because of a penalty or a robots.txt issue, but because the page is simply too heavy for the crawler to finish.
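To make the cutoff concrete, here is a minimal Python sketch of what a 2MB read limit does to a page. It assumes 1MB means 1,048,576 bytes and that the limit applies to the raw response bytes. The function names and the sample markers are ours, for illustration only, and say nothing about how Googlebot is built.

```python
GOOGLEBOT_HTML_LIMIT = 2 * 1024 * 1024  # 2MB, assuming 1MB = 1,048,576 bytes


def split_at_limit(html: bytes, limit: int = GOOGLEBOT_HTML_LIMIT) -> tuple[bytes, bytes]:
    """Split a raw HTML response into the part within the limit and the part beyond it."""
    return html[:limit], html[limit:]


def unseen_markers(html: bytes, markers: list[bytes], limit: int = GOOGLEBOT_HTML_LIMIT) -> list[bytes]:
    """Return the markers that exist in the page but not within the first `limit` bytes."""
    read, _ = split_at_limit(html, limit)
    return [marker for marker in markers if marker in html and marker not in read]
```

Passing markers such as `b"application/ld+json"` or `b"<nav"` gives a rough indication of whether structured data or navigation sits entirely past the 2MB mark on a given page.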

This isn't a new rule. Google's documentation previously listed a general 15MB crawl limit across all its crawlers, but when they reorganised that documentation in February 2026, they clarified that Googlebot specifically has always operated with a 2MB limit for HTML.

How We Ran This

We ran a raw HTTP crawler, no JavaScript, no headless browser, across 35 major Australian retail homepages. This is exactly what Google's crawler sees before it executes any scripts: raw HTML from the server, nothing more.
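For readers who want to reproduce this kind of measurement, a raw fetch can be sketched with Python's standard library. This is not the crawler we used, only an illustration of the same idea: request the page, run no scripts, and measure the bytes that come back. The User-Agent string and function names are hypothetical.

```python
import urllib.request


def fetch_raw_html(url: str, timeout: float = 30.0) -> bytes:
    """Fetch the server's response body without rendering or executing JavaScript."""
    request = urllib.request.Request(
        url,
        headers={"User-Agent": "html-weight-check/0.1"},  # hypothetical identifier
    )
    # urlopen follows redirects and does not ask for a compressed response by default,
    # so the body length normally reflects the uncompressed HTML.
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read()


def size_in_kb(html: bytes) -> float:
    """Convert a byte count to kilobytes, assuming 1KB = 1,024 bytes."""
    return len(html) / 1024
```

Calling `size_in_kb(fetch_raw_html("https://yoursite.com"))` returns a figure comparable to the ones reported here, although some sites respond differently to unfamiliar user agents.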

We chose homepages because they carry the heaviest load and are crawled most frequently. If a site has a weight problem anywhere, the homepage is usually where it shows up first. This is a straight snapshot of where Australian retail actually sits, from the same vantage point Google has every time it visits.

Sites With a Weight Problem

Two sites in our dataset are already over the 2MB limit.

- HiSmile Teeth: 3,877KB. Nearly double the threshold. Structured data, navigation, product copy: anything past the 2MB mark is invisible to the crawler. For a brand that has built much of its growth on performance marketing and product visibility, a homepage Google can only half-read is a serious structural issue.

- Princess Polly: 2,314KB. Over the limit, but closer to the edge. Roughly 300KB of homepage content that Google doesn't read, which is not a marginal concern for a site competing hard in fashion search. Princess Polly has done genuinely impressive work on international growth, which makes this even more surprising to find.

Both are on Shopify, which is worth noting not as a criticism of the platform, but as a signal of what happens when app scripts, inline JSON, analytics payloads, and theme bloat accumulate without regular auditing. Shopify is an excellent platform. Unaudited Shopify is a different story.

Then there's the at-risk group: Kmart at 1,923KB, Myer at 1,886KB, David Jones at 1,816KB, JB Hi-Fi at 1,537KB. None are over the limit today, but all are within range of a single meaningful change pushing them there. In a market this competitive, invisible technical debt has real consequences.
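The margins are easier to see as arithmetic. The sketch below uses the homepage sizes reported above and assumes the limit is 2,048KB (1MB = 1,024KB). Under a decimal definition of 2,000KB, every margin shrinks by 48KB.

```python
LIMIT_KB = 2 * 1024  # assumes 1MB = 1,024KB

# Homepage HTML sizes in KB, as reported in this article
homepage_kb = {
    "HiSmile": 3877,
    "Princess Polly": 2314,
    "Kmart": 1923,
    "Myer": 1886,
    "David Jones": 1816,
    "JB Hi-Fi": 1537,
}


def headroom_kb(size_kb: int, limit_kb: int = LIMIT_KB) -> int:
    """KB remaining before the limit; a negative value means the page is over."""
    return limit_kb - size_kb


for site, size in homepage_kb.items():
    gap = headroom_kb(size)
    label = "over by" if gap < 0 else "headroom"
    print(f"{site}: {size:,}KB, {label} {abs(gap):,}KB")
```

On these figures, Kmart has 125KB of headroom and Myer 162KB, which is less than many single inline data payloads.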

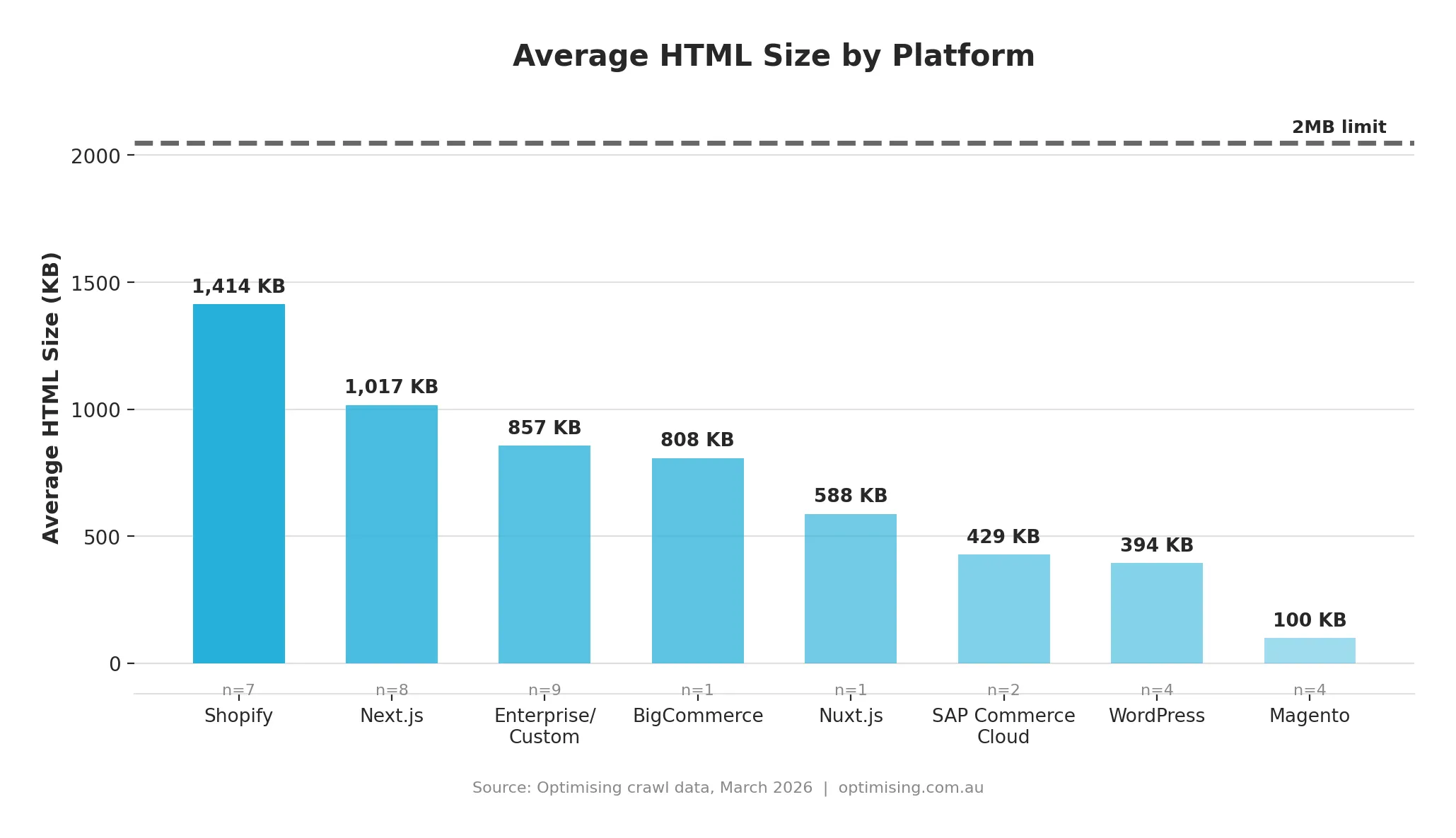

The Platform Picture

Shopify came in heaviest at an average of 1,414KB, followed by Next.js at 1,017KB. But averages mislead here. The ceiling is absolute, and high variance on a high average means a meaningful proportion of sites on those platforms are already in danger.

The fact that two of the seven Shopify sites are over 2MB matters more than the platform average. Being on Shopify doesn't protect you from this problem, and if you're running a large Shopify store that hasn't had an HTML weight audit recently, this is your prompt.

Next.js tells an interesting story because the average hides a significant split. Four sites came back under 400KB, including Coles at 333KB, Everyday Rewards at 198KB, and IGA at 93KB. The other four are all over 900KB. The framework isn't the problem; the implementation is. Two sites can run on identical infrastructure and produce completely different outcomes depending on how carefully the build has been managed over time.

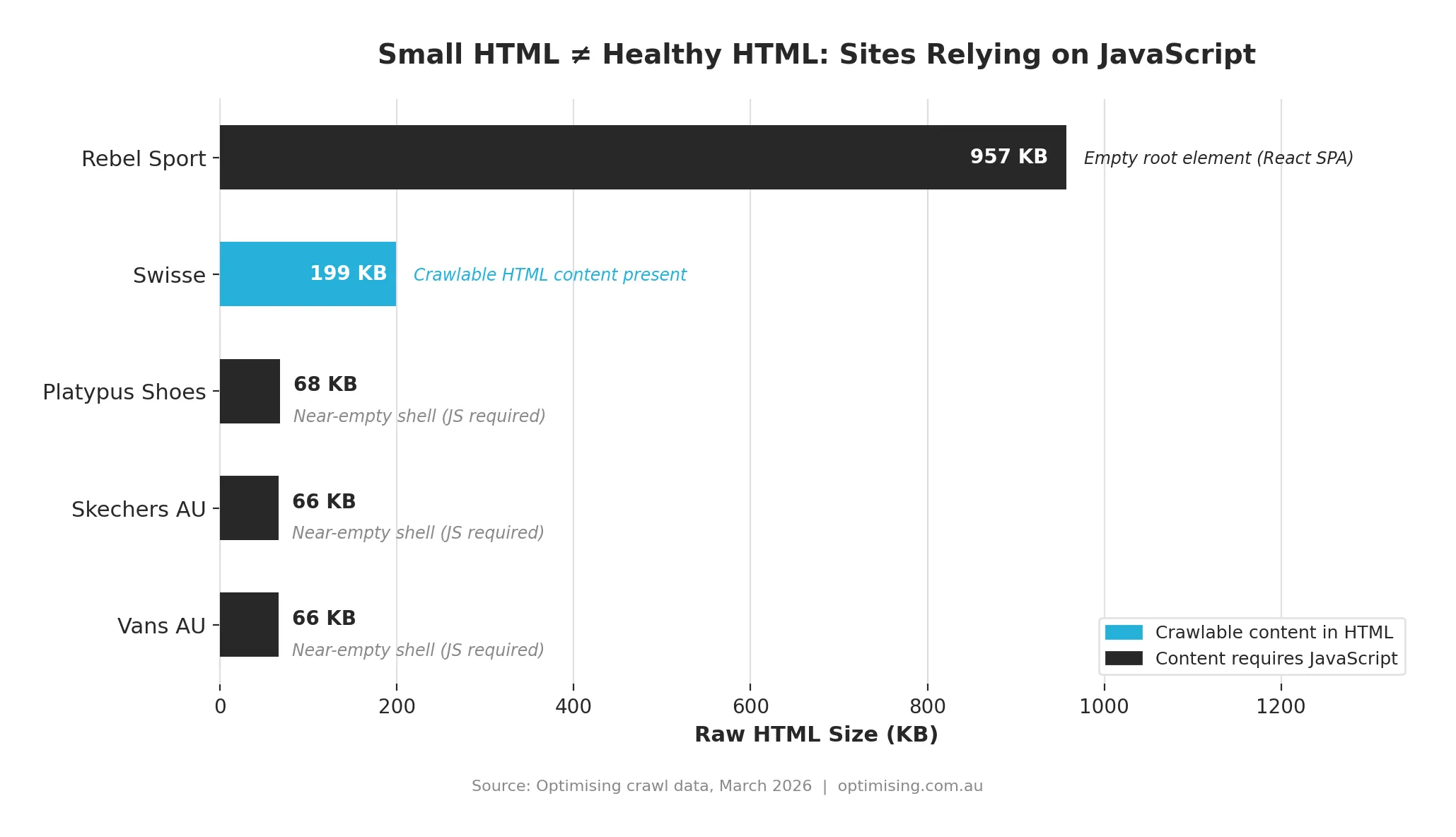

The Different Problem: Sites That Are Nearly Empty

Magento averaged 100KB across four sites, the leanest figure in the dataset by far. That sounds like a great result against a 2MB threshold. It isn't.

Three of the four Magento sites returned near-empty HTML: a shell with a handful of script tags and an empty container. The actual page content, including products, navigation, text, and structured data, only appears after JavaScript executes. Our crawler never saw it, and neither does Google's, at least not reliably.

Rebel Sport had the same issue on a different stack. Their homepage came back at 957KB but the root element was empty: a React SPA delivering its content entirely through JavaScript.
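A rough way to spot this pattern is to count how much visible text the raw HTML contains once scripts and styles are ignored. The sketch below uses Python's built-in `html.parser`. The 200-character threshold is an arbitrary assumption for illustration, not a figure from our crawl, and the class and function names are ours.

```python
from html.parser import HTMLParser


class VisibleTextCounter(HTMLParser):
    """Count text characters that sit outside script, style, noscript, and template tags."""

    SKIPPED = {"script", "style", "noscript", "template"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.visible_chars = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0:
            self.visible_chars += len(data.strip())


def looks_like_empty_shell(html: str, min_visible_chars: int = 200) -> bool:
    """Heuristic: True when the raw HTML carries almost no visible text."""
    counter = VisibleTextCounter()
    counter.feed(html)
    counter.close()
    return counter.visible_chars < min_visible_chars
```

A page can be heavy and still fail this check, which is the Rebel Sport case: hundreds of kilobytes of markup and script, but an empty root element.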

Google can execute JavaScript, but it does so in a second wave that's slower, less reliable, and lower priority than standard HTML crawling. A near-empty page is a different problem to an overweight one, but it's not a better one.

Thin HTML is not the same as efficient HTML, and that distinction matters enormously when you're trying to build organic visibility at scale.

What's Actually Causing the Weight?

When a Shopify or Next.js store comes back at 2MB or more, it's rarely because of just one thing. In our experience auditing large ecommerce sites, the culprits tend to cluster around a few recurring patterns: inline JSON-LD expanded to include full product catalogues; scripts embedded directly in HTML rather than loaded externally; analytics and personalisation payloads writing large data objects into the page server-side; Shopify app scripts compounding quietly across dozens of installs; and theme code carrying conditional logic for features that are no longer active.
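A first-pass audit can attribute bytes to some of these patterns directly from the raw HTML. The sketch below, again using Python's `html.parser`, totals the bytes inside inline scripts, JSON-LD blocks, inline JSON payloads, and inline styles. The bucket names are our own, and it is a starting point under those assumptions rather than a full audit: it won't tell you which app or tag wrote each block.

```python
from collections import Counter
from html.parser import HTMLParser


class WeightBreakdown(HTMLParser):
    """Total the bytes found inside inline <script> and <style> blocks, grouped by kind."""

    def __init__(self):
        super().__init__()
        self.bytes_by_bucket = Counter()
        self._bucket = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "style":
            self._bucket = "inline style"
        elif tag == "script":
            script_type = (attributes.get("type") or "").lower()
            if "src" in attributes:
                self._bucket = None  # external script: its body is not part of the HTML weight
            elif script_type == "application/ld+json":
                self._bucket = "json-ld"
            elif script_type == "application/json":
                self._bucket = "inline json"
            else:
                self._bucket = "inline script"

    def handle_endtag(self, tag):
        if tag in ("script", "style"):
            self._bucket = None

    def handle_data(self, data):
        if self._bucket is not None:
            self.bytes_by_bucket[self._bucket] += len(data.encode("utf-8"))


def weight_breakdown(html: str) -> dict[str, int]:
    """Return bytes per bucket plus the total size of the document."""
    parser = WeightBreakdown()
    parser.feed(html)
    parser.close()
    breakdown = dict(parser.bytes_by_bucket)
    breakdown["total"] = len(html.encode("utf-8"))
    return breakdown
```

Comparing each bucket against `total` shows quickly whether the bulk is structured data, embedded scripts, or serialised data objects.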

Most teams are surprised by their number when they first look at it. Not because the weight appeared overnight, but because it accumulated gradually, one app install and one new script at a time, with no threshold set to trigger a conversation.

HTML weight is nearly always addressable without changing platforms. HiSmile doesn't need to leave Shopify to fix a 3,877KB page. They need an audit that identifies what's generating the bulk and a plan to reduce it.

The Practical Test

Open a terminal and run:

`curl -sLo /dev/null -w "%{size_download}" https://yoursite.com | awk '{printf "%.2f MB\n", $1/1048576}'`

The number it returns is your HTML size in megabytes: curl reports the download size in bytes, and the awk step divides it by 1,048,576 (1,024 × 1,024) to convert it. Over 1.5MB means the conversation is worth having. Over 2MB means it's overdue.

For a more complete picture, check the page in Google's URL Inspection tool inside Search Console. It shows you what Googlebot actually received and rendered, which can reveal a meaningful gap between what you think your site looks like and what the crawler sees. We'd also suggest running this across your highest-priority category and product pages, not just the homepage. For large catalogue retailers, deep category pages with expanded filtering and inline reviews can get surprisingly close to the threshold too.
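Checking a list of pages is easy to script. The sketch below applies the 1.5MB and 2MB thresholds from this section to any list of URLs. The function names are hypothetical, and `measure` can be swapped for any function that returns a page's HTML size in bytes.

```python
import urllib.request

MB = 1024 * 1024  # assumes 1MB = 1,048,576 bytes


def verdict(size_bytes: int) -> str:
    """Classify an HTML size against the 1.5MB and 2MB thresholds."""
    if size_bytes > 2 * MB:
        return "over 2MB: overdue"
    if size_bytes > 1.5 * MB:
        return "over 1.5MB: worth a conversation"
    return "under 1.5MB"


def html_size(url: str, timeout: float = 30.0) -> int:
    """Size in bytes of the raw HTML response, with no JavaScript executed."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return len(response.read())


def report(urls, measure=html_size):
    """Return (url, size_in_bytes, verdict) for each URL."""
    rows = []
    for url in urls:
        size = measure(url)
        rows.append((url, size, verdict(size)))
    return rows


# Example (the paths are placeholders for your own priority pages):
# for url, size, label in report(["https://yoursite.com/", "https://yoursite.com/category"]):
#     print(f"{url}: {size / MB:.2f} MB, {label}")
```

Run on a schedule, a script like this turns HTML weight into a number someone sees before a launch rather than weeks after it.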

The Brands With the Most to Lose

The sites sitting between 1.5MB and 2MB are in the most precarious position. They're not over the limit today, but the margin of safety is thin enough that a single significant change could cross it. A new analytics tag here, an expanded structured data block there, a seasonal homepage module that never gets removed. Kmart, Myer, David Jones, and JB Hi-Fi are all investing significantly in organic search, and all of them are one homepage update away from a crawlability issue they may not notice for weeks.

There's an inherent tension here that more technical teams need to be honest about. The ambition to deliver a sophisticated homepage experience and the requirement to keep that experience fully indexable are increasingly pulling in opposite directions. In our experience, SEO rarely wins that conversation unless the data is sitting right in front of everyone. Which is partly why we ran this research. The evidence is on the table.

That tension has a solution. It requires knowing your HTML size, knowing what's generating it, and treating it as a metric worth monitoring alongside page speed and Core Web Vitals.

If you don’t know your number, now’s the time to find it, and we’re ready to help you optimise it.

Crawl data collected by the Optimising team across 35 major Australian retail homepages. HTML sizes reflect raw server responses prior to JavaScript execution. Data collected March 2026.

James Richardson

Co-Founder

James Richardson is one of the co-founders of Optimising, which he started in 2008. He's spent nearly two decades helping Australian brands get found online, from technical SEO and platform migrations through to AI search.

Outside work, he's usually on the basketball court or golf course or being comprehensively outsmarted by his three daughters.